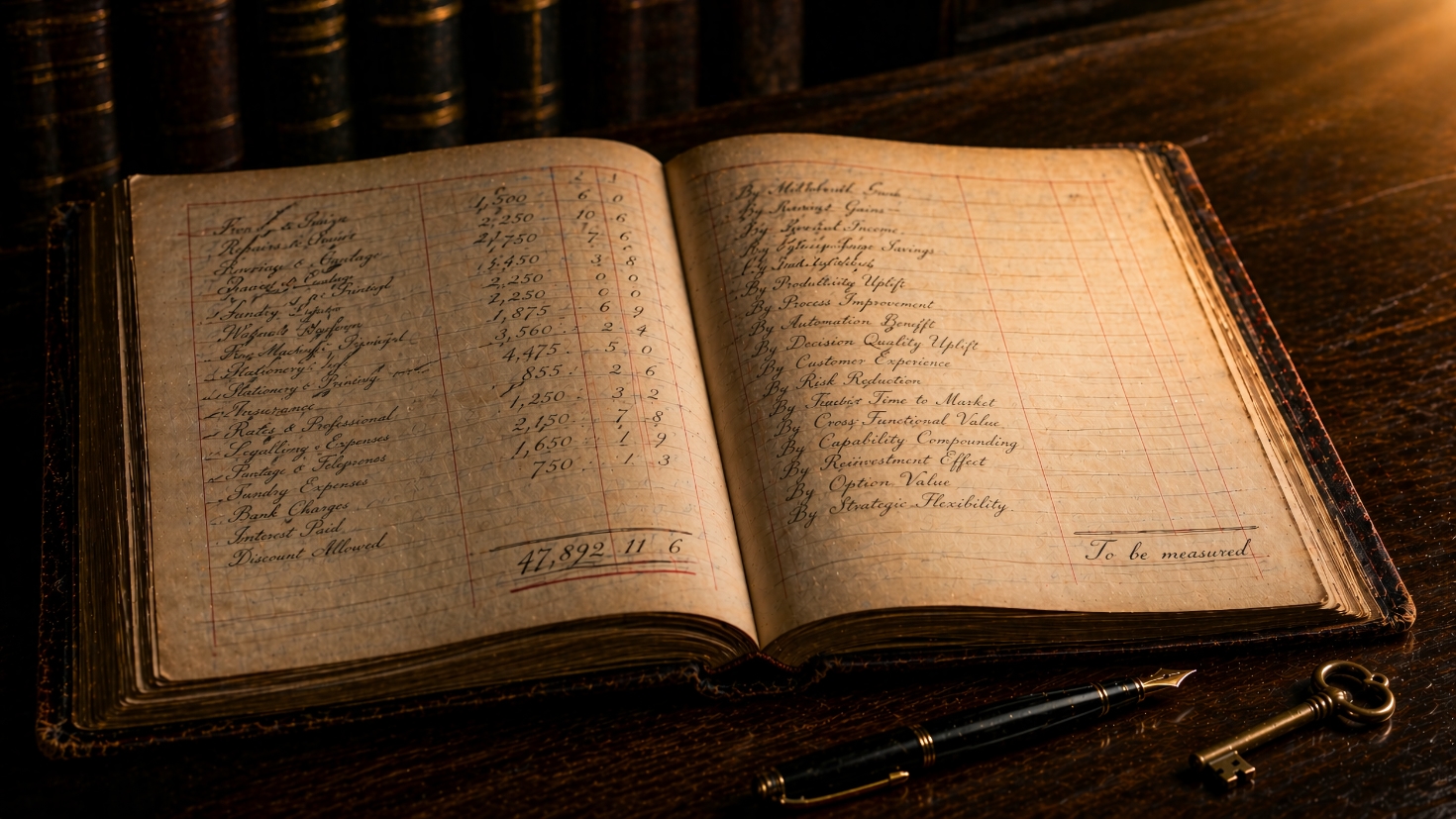

The Appreciating Ledger: When AI Capital Outgrows the CFO's Rulebook

The clearest signal in Bain’s April 2026 CFO Survey is that CFOs’ satisfaction with AI outcomes tracks their AI adoption curve. Overall, 31% of CFOs are satisfied with their AI outcomes; the figure rises to 41% among those who have already scaled AI into production, and to over 60% in the top quartile of AI maturity.

The same pattern appears in the organisation-level data. PwC’s 2026 AI Performance Study, drawing on interviews with 1,217 senior executives, finds that 74% of AI’s economic value is captured by 20% of organisations. BCG’s Widening AI Value Gap research, based on responses from 1,250 executives across nine industries, reports that the 5% of organisations it classifies as “future-built” expect roughly twice the revenue growth of laggards, and 40% greater cost reductions, in the areas where they apply AI. Those same organisations allocate 64% more of their IT budget to AI and reinvest their returns into further capability, a concentration only possible where value is accumulating.

The capital is moving behind the pattern. Grant Thornton’s Q1 2026 CFO Survey reports that 68% of CFOs expect IT and digital spend to rise over the coming year, the highest figure in the survey’s 21-quarter history. Bain’s own data tells the same story at CFO level: 83% of CFOs plan to increase enterprise-wide AI spend by more than 15% over the next two years, with 42% planning increases of 30% or more. Investment is flowing, the leader-laggard spread is widening, and the CFOs at the top of the Bain curve are the leading edge of a separation that three other data sources are now measuring independently.

This is the AI Stages of Adoption rendered at the level of the finance function: different organisations are at different stages, and the satisfaction data tracks that reality. The finance function is not behind the curve. It is standing at the precise point where the profession’s instruments are about to evolve. This article diagnoses why the conventional ledger under-reads AI value, describes how the scorecard can be extended, and sets out the practical moves available now.

Why the conventional ledger under-reads AI value

AI investment behaves in ways the finance profession’s standard instruments were not designed to register. Three characteristics matter.

First, AI assets appreciate rather than depreciate through use. Models improve as they accumulate interaction data, processes reorganised around AI gain efficiency as staff adapt to them, and governance frameworks built for one application become infrastructure for the next. Conventional depreciation schedules, calibrated to physical assets that wear out, miss this entirely: a fine-tuned model is often worth more in year three than in year one, which is the opposite of the pattern the ledger is built to record.
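The contrast with a depreciation schedule can be made concrete with a short sketch. It compares straight-line book value with the value of an asset that improves through use. The cost, the five-year useful life, and the 10% annual improvement rate are hypothetical figures chosen only for illustration; they are not drawn from any of the surveys cited here.

```python
def straight_line_book_value(cost: float, useful_life_years: int, year: int) -> float:
    """Book value after `year` years of straight-line depreciation, zero salvage value."""
    remaining_years = max(useful_life_years - year, 0)
    return cost * remaining_years / useful_life_years


def appreciating_value(cost: float, annual_improvement: float, year: int) -> float:
    """Illustrative value of an asset that improves by a fixed rate per year of use."""
    return cost * (1 + annual_improvement) ** year


# All figures are hypothetical and chosen only to show the two curves diverging.
COST = 1_000_000.0
USEFUL_LIFE_YEARS = 5
ANNUAL_IMPROVEMENT = 0.10

for year in range(4):
    ledger = straight_line_book_value(COST, USEFUL_LIFE_YEARS, year)
    in_use = appreciating_value(COST, ANNUAL_IMPROVEMENT, year)
    print(f"year {year}: ledger {ledger:,.0f} | illustrative value in use {in_use:,.0f}")
```

Under these assumptions the ledger figure falls every year while the illustrative value in use rises, so the gap between the two is the part of the asset the schedule does not record.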

Second, returns accumulate through reinvestment rather than accrue linearly. An AI initiative that releases capacity in one function funds the next initiative in another, and the governance work done for the first high-risk use case becomes the platform for the next five. BCG’s future-built data shows this reinvestment pattern empirically: the leading organisations allocate 64% more of their IT budget to AI, and the accumulation shows up in their three-year performance. Standard ROI calculations, which stop at the attributable benefit of a single project, do not observe the reinvestment multiplier.
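A minimal sketch of the reinvestment multiplier follows. It assumes a simplified model in which each year's benefit is a fixed rate on the capability base and a chosen share of that benefit is added back to the base. The function name, the 20% return rate, and the 50% reinvestment share are hypothetical and serve only to show the arithmetic.

```python
def cumulative_benefit(
    initial_investment: float,
    annual_return_rate: float,
    years: int,
    reinvest_share: float,
) -> float:
    """Total benefit over `years` when a share of each year's benefit is reinvested."""
    capability_base = initial_investment
    total_benefit = 0.0
    for _ in range(years):
        benefit = capability_base * annual_return_rate
        total_benefit += benefit
        # The reinvested share enlarges the base that next year's benefit is earned on.
        capability_base += benefit * reinvest_share
    return total_benefit


# Hypothetical inputs: the single-project view sets reinvest_share to zero.
project_view = cumulative_benefit(1_000_000, 0.20, 5, reinvest_share=0.0)
reinvested_view = cumulative_benefit(1_000_000, 0.20, 5, reinvest_share=0.5)
multiplier = reinvested_view / project_view
print(f"project view: {project_view:,.0f}")
print(f"with reinvestment: {reinvested_view:,.0f} (multiplier {multiplier:.2f})")
```

With the reinvestment share at zero the benefit accrues linearly, which is what a single-project ROI calculation observes. Any positive share makes the total grow faster than linearly, and the ratio between the two totals is the multiplier that calculation leaves out.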

Third, value crosses organisational boundaries. The data-preparation work done for a customer-service pilot becomes the foundation for a pricing model in a different business unit, the prompt library developed for one team is reused by another, and the skills built by early adopters diffuse through the organisation. Project-level ROI, designed to attribute returns back to the initiative that generated them, cannot see value that surfaces elsewhere. This is the Scaling and Synergy potential that the conventional ledger was not designed to capture, and the same under-reading appears on the cost side: the True Investment Profile systematically undercounts data preparation, retraining cycles, and governance overhead. The ledger understates both the asset and the work that produces it, and the pattern does not stop at the organisation’s boundary.

The Brookings Institution’s January 2026 blueprint describes a J-curve in AI investment at the national level: expenditure and organisational adjustment arrive first and depress measured productivity, and output gains materialise only later, after firms have redesigned workflows, retrained staff, and integrated AI into decision processes. The paper argues that national accounts still record AI as ordinary operating cost rather than investment — the same error made by project-level ROI inside the organisation.

The international accounting framework already permits the treatment AI capital requires. IAS 38 sets four tests for intangible assets: identifiability, control, measurability, and future economic benefit. Modern AI systems (trained models, curated datasets, prompt libraries, evaluation frameworks) can meet all four. The rules permit this treatment; corporate practice has not yet caught up. The Financial Accounting Standards Board’s September 2025 update, ASU 2025-06, adjusted the internal-use software capitalisation framework, effective for annual periods beginning after 15 December 2027, and further movement is likely. The CFO who treats AI as capital is not arguing against the accounting profession. They are arguing alongside it.

Extending the scorecard

Bain’s finding is that CFOs who have scaled AI identify speed as their biggest AI win, even though cost and efficiency were their original objectives. The implication is that the scorecard should track time-to-insight, time-to-action, days-to-close, forecast refresh cadence, and time-to-variance resolution with the same rigour as cost. When speed becomes the headline metric, the AI value that a slower organisation would have missed becomes legible. Credit where it is due: this is Bain’s argument, and it is a concrete first move for any CFO building a more honest scorecard.

The broader move is to extend the scorecard across four indicator types rather than relying on any single category. In earlier work I have set out why Boards making major decisions benefit from combining lagging indicators of confirmed past outcomes (cost reductions, time saved, revenue attributed), leading indicators of early signals (decision velocity, process adherence, capability adoption rates), predictive indicators of future value (AI-modelled forecasts of where returns will emerge next), and reasoned indicators derived from automated reasoning or formal verification that prove a condition holds or does not hold. The same combination applies to the CFO’s scorecard, and the reasoned category matters particularly for finance: automated reasoning can prove that a compliance condition is met, that a financial control has not been breached, or that a regulatory threshold has been maintained, producing outputs that carry a different kind of certainty from probabilistic prediction. The scorecard then moves from retrospective accounting toward forward-looking decision instrumentation.
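One way to make the four indicator types operational is to tag every scorecard metric with its type and check which types are not yet represented. The sketch below is one possible arrangement under that assumption. The class and function names are hypothetical, and the example metrics are taken from the lagging and leading examples listed above.

```python
from dataclasses import dataclass
from enum import Enum


class IndicatorType(Enum):
    LAGGING = "lagging"        # confirmed past outcomes
    LEADING = "leading"        # early signals
    PREDICTIVE = "predictive"  # modelled forecasts of future value
    REASONED = "reasoned"      # conditions proven by automated reasoning


@dataclass(frozen=True)
class Metric:
    name: str
    indicator_type: IndicatorType


def missing_indicator_types(metrics: list[Metric]) -> list[IndicatorType]:
    """Return the indicator types that no metric on the scorecard covers."""
    present = {metric.indicator_type for metric in metrics}
    return [t for t in IndicatorType if t not in present]


# Hypothetical scorecard that tracks only lagging and leading indicators.
scorecard = [
    Metric("cost reductions", IndicatorType.LAGGING),
    Metric("time saved", IndicatorType.LAGGING),
    Metric("decision velocity", IndicatorType.LEADING),
]

gaps = missing_indicator_types(scorecard)
print([gap.value for gap in gaps])
```

A scorecard built this way shows at a glance where it still relies on a single category, which is the condition the four-type combination is meant to prevent.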

The harder shift is to treat capability as an asset class rather than an expense line. The work that produces returns over time (the data cleanup, the governance framework, the prompt library, the trained workforce, and the vendor relationships) currently appears in the ledger as expense. A parallel view that treats these as capital contributions to an organisational capability is defensible within IAS 38, but within the prevailing culture of expense treatment it requires deliberate intervention.

The next shift is from project discipline to portfolio discipline. Gartner’s 2026 guidance, reported via CFO.com, is that CFOs should evaluate AI spend as a portfolio of distinct use cases with different timelines, risks, and metrics, not through a single ROI formula. A balanced portfolio maintains a distribution across quick wins that fund reinvestment, capability builds that create the infrastructure future initiatives depend on, and transformative initiatives that deliver long-horizon value. Imposing uniform project discipline across that distribution collapses it into a single category and loses the accumulation mechanism.

Reinvestment itself is the final move. BCG’s future-built organisations allocate 64% more of their IT budget to AI than laggards and reinvest AI-generated returns into further AI capability, a pattern that is not accidental but the result of a finance-function decision. The default can be set so that productivity gains from AI are reinvested in the next capability rather than absorbed into general operating margin. Without that default, the accumulation mechanism has nothing to feed.

The instruments already exist. The task is adoption, not invention.

The practical move

The fastest move available to the finance function is to add three categories to the existing AI investment scorecard: time-to-insight metrics that capture speed, cross-functional value attribution that captures the accumulation mechanism, and capability accumulation that captures the emerging asset class. None of these require regulatory change. They require a decision about what gets tracked.

Separating the portfolio comes next. Splitting AI spend into its three categories and applying different evaluation criteria to each allows the accumulating assets to become visible. Quick wins carry conventional ROI discipline, capability builds are evaluated on platform reuse and enabling value, and transformative initiatives are evaluated on option value and strategic positioning. The single-ROI-formula model is the one to retire: a portfolio measured as though every component were a quick win cannot surface the accumulating assets hidden inside it.
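The separation can be sketched as a simple grouping step. The mapping below pairs each of the three categories with the evaluation criteria named above. The initiative names and spend figures are hypothetical, and the structure is one possible arrangement rather than a prescribed method.

```python
from collections import defaultdict

# Evaluation criteria per category, as described in the text.
EVALUATION_CRITERIA = {
    "quick_win": ["conventional ROI"],
    "capability_build": ["platform reuse", "enabling value"],
    "transformative": ["option value", "strategic positioning"],
}


def split_portfolio(initiatives: list[tuple[str, str, float]]) -> dict[str, float]:
    """Total the spend per category from (name, category, spend) records."""
    totals: dict[str, float] = defaultdict(float)
    for name, category, spend in initiatives:
        if category not in EVALUATION_CRITERIA:
            raise ValueError(f"{name}: unknown category {category!r}")
        totals[category] += spend
    return dict(totals)


# Hypothetical initiatives and spend figures, for illustration only.
portfolio = [
    ("invoice matching", "quick_win", 200_000.0),
    ("data cleanup", "capability_build", 500_000.0),
    ("governance framework", "capability_build", 300_000.0),
    ("pricing model", "transformative", 400_000.0),
]

for category, spend in split_portfolio(portfolio).items():
    criteria = ", ".join(EVALUATION_CRITERIA[category])
    print(f"{category}: {spend:,.0f} evaluated on {criteria}")
```

Rejecting an unrecognised category is a deliberate choice in this sketch: an initiative that cannot be placed in one of the three groups would otherwise fall back to the single-ROI treatment by default.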

Establishing the reinvestment default is a governance decision as much as a measurement one. The explicit default should be that a measurable portion of AI-generated savings is reinvested into further AI capability rather than absorbed into general operating margin. Without this, the accumulation mechanism has no fuel. The CFO is well placed to set this default, and well placed to hold the organisation to it.

Early audit committee engagement shapes a conversation that is about to become routine, because the movement in international accounting standards is real and ongoing. The CFO who briefs their audit committee now on the IAS 38 criteria and the live debate about AI capital treatment enters the reporting-cycle conversation as an architect rather than as a respondent: early engagement shapes the agenda, late engagement inherits it.

None of this moves in isolation. Treating intangibles as capital carries historical associations with balance-sheet optics, and CFOs are professionally punished more severely for write-downs than rewarded for unrecorded value, so the conservative default has rational roots. Standards movement from the IASB and the FASB, and positioning from the Big Four audit firms, will shape the pace at which any individual finance function can move — which is why engagement with those bodies, and with the audit committee as their counterpart inside the organisation, matters more than solo conviction. The CFO leading this evolution is not contradicting those constraints; they are entering the conversation earlier than peers who wait for the standards to arrive.

The invisible asset must be measured directly on the internal scorecard even while the external financial statements continue to treat it as expense. The capability that AI investment is building (the data, the governance, the skills, the prompt libraries, the vendor relationships) should appear in some form on the management report, because measurement begins inside the organisation and reporting convention follows.

The conversation that is about to become standard

The CFO’s role in this evolution is architect, not gatekeeper. The shift from measuring AI as a project to measuring AI as capital is already underway at the leading edge of the profession, and the opportunity for the rest is to build it now, before the pattern becomes expected.

The value is accumulating, and it will keep accumulating. Bain’s satisfaction gradient, PwC’s concentration finding, and BCG’s widening leader-laggard gap all point the same way. Organisations whose finance functions measure the accumulation mechanism are pulling ahead. Organisations whose finance functions still measure only the project are not.

The Four Indicator Types, the True Investment Profile, the Scaling and Synergy dimension, and the portfolio approach to AI investment are all available to any finance function willing to adopt them.

The CFO who leads this evolution converts the AI investment conversation from one about cost justification to one about capital accumulation. That is a different conversation in a different register. It is also the conversation that is about to become standard.

Let's Continue the Conversation

Thank you for reading about the instruments the finance function needs to see AI capital clearly. I'd welcome hearing about your organisation's experience with the CFO's AI measurement challenge: whether you're extending the scorecard to capture speed and cross-functional value alongside cost, wrestling with how to treat AI capability as an accumulating asset rather than a recurring expense, or finding ways to build the reinvestment default that keeps the mechanism alive.